Blog

Articles about computational science and data science, neuroscience, and open source solutions. Personal stories are filed under Weekend Stories. Browse all topics here. All posts are CC BY-NC-SA licensed unless otherwise stated. Feel free to share, remix, and adapt the content as long as you give appropriate credit and distribute your contributions under the same license.


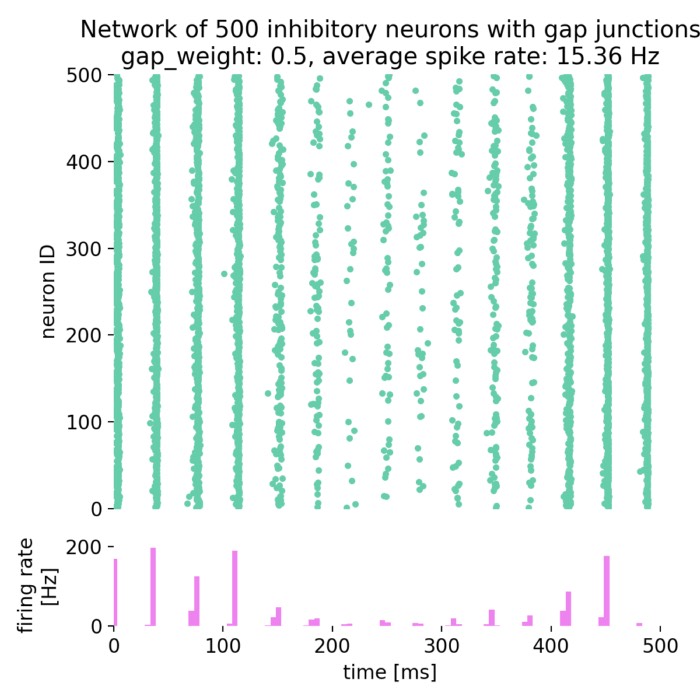

On the role of gap junctions in neural modelling: Network example

As a follow-up to our previous post on gap junctions, we now explore how gap junctions can be implemented in a spiking neural network (SNN) using the NEST simulator. Gap junctions are electrical synapses that allow direct electrical communication between neurons, which can significantly influence the dynamics of neural networks.

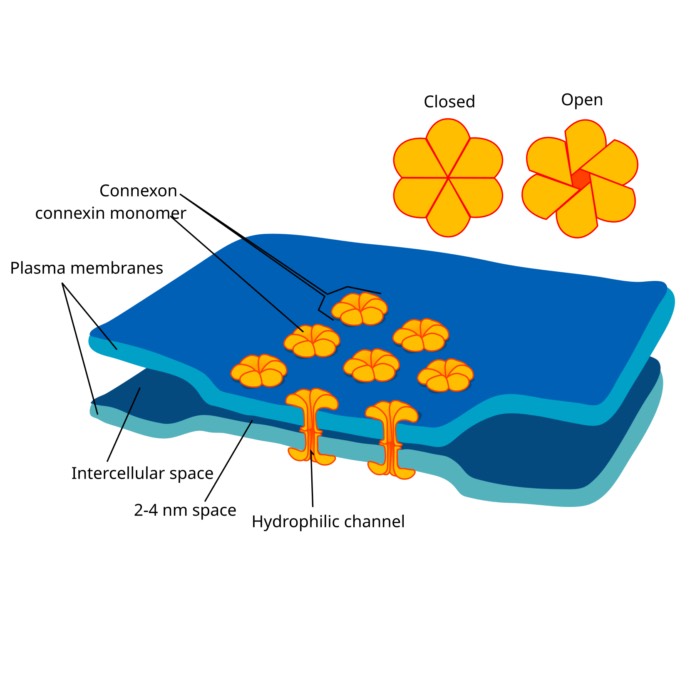

On the role of gap junctions in neural modelling

Gap junctions are specialized intercellular connections that facilitate direct electrical and chemical communication between neurons. Unlike chemical synaptic transmission, which relies on neurotransmitter release, gap junctions enable the direct passage of ions and small molecules through channels formed by connexins, which can synchronize neuronal activity. In computational neuroscience, modeling gap junctions is crucial for understanding their role in neural network dynamics, synchronization, and various brain functions.
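To make the synchronizing effect a bit more concrete, here is a minimal sketch in plain Python. It is not the model from the post and does not use NEST: it assumes two passive (non-spiking) leaky cells whose only interaction is an ohmic gap-junction current proportional to their voltage difference. The function name `simulate_pair`, the parameter values, and the choice to express the coupling `g_gap` relative to the leak conductance are all illustrative assumptions.

```python
def simulate_pair(g_gap, v1=-70.0, v2=-50.0, e_leak=-65.0,
                  tau=10.0, dt=0.1, steps=100):
    """Forward-Euler integration of two passive cells coupled by a gap junction.

    Assumptions (illustrative, not taken from the post):
    - each cell is a leaky integrator relaxing to e_leak (mV) with time constant tau (ms)
    - the gap-junction current is ohmic and symmetric: I_gap = g_gap * (V_other - V_self)
    - g_gap is dimensionless, i.e. expressed relative to the leak conductance
    """
    for _ in range(steps):
        # Current flows from the more depolarized cell to the less depolarized one.
        i_gap_1 = g_gap * (v2 - v1)
        i_gap_2 = g_gap * (v1 - v2)
        dv1 = (-(v1 - e_leak) + i_gap_1) / tau
        dv2 = (-(v2 - e_leak) + i_gap_2) / tau
        v1 += dt * dv1
        v2 += dt * dv2
    return v1, v2


if __name__ == "__main__":
    for g in (0.0, 1.0):
        a, b = simulate_pair(g)
        print(f"g_gap={g}: |V1 - V2| after 10 ms = {abs(a - b):.2f} mV")
```

In this toy setup, the coupled pair closes its voltage gap faster than the uncoupled pair, while the mean of the two voltages evolves identically in both cases. That is the basic equalizing mechanism behind gap-junction-driven synchronization; spiking dynamics and realistic conductance units are deliberately left out.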

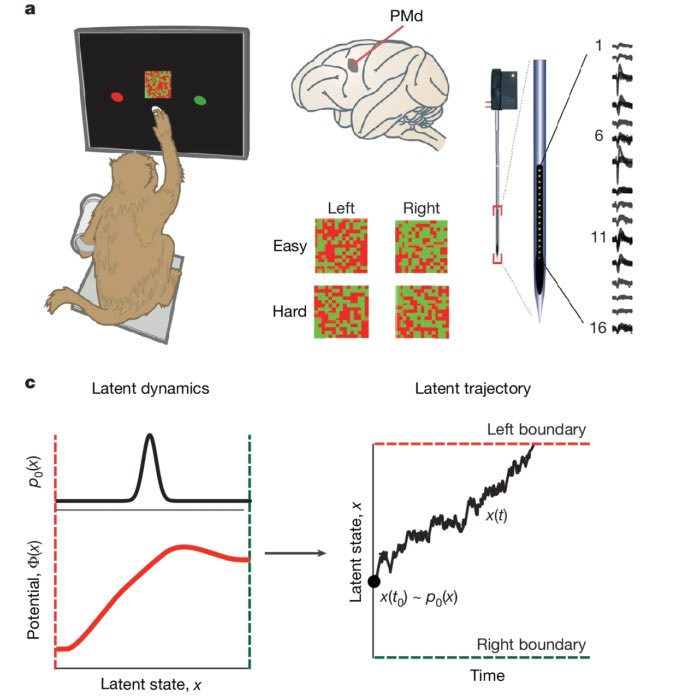

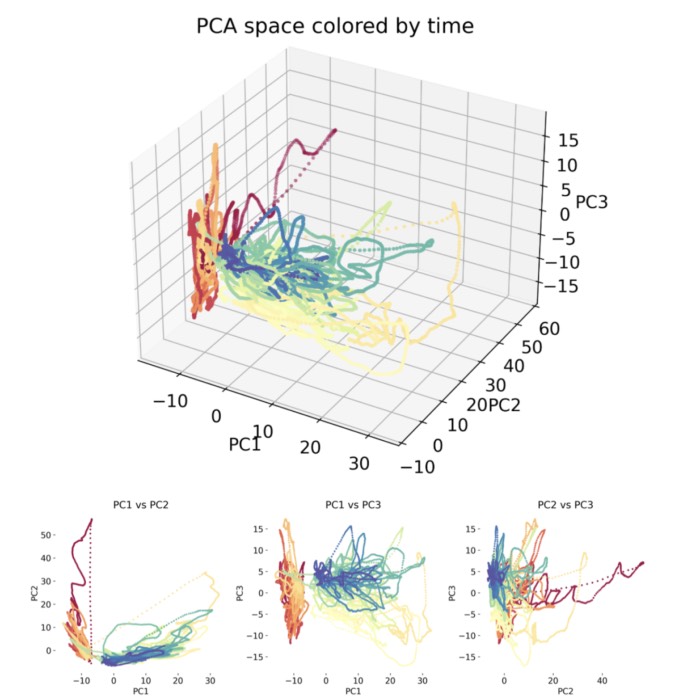

Shared dynamics, diverse responses: decoding decision-making in premotor cortex

Last week, I presented a recent study by Genkin et al., The dynamics and geometry of choice in the premotor cortex, in our Journal Club, and I found it conceptually compelling enough to summarize it here as well. The paper explores how perceptual decisions are encoded not in isolated neural responses but in population-wide latent dynamics. Traditionally, the neuroscience of decision-making has focused on ramping activity in individual neurons, heterogeneous peristimulus time histograms (PSTHs), and decoding strategies based on trial-averaged firing rates. In contrast, this work proposes that a shared low-dimensional decision variable evolves over time and explains the observed diversity in single-neuron responses. In our discussion, we focused on the paper’s central figures, using them to guide a step-by-step reconstruction of the study’s logic and findings.

Reflections on joining Bluesky: Opportunities and risks for the scientific community

Over the past two weeks, I have taken a closer look at Bluesky, exploring its features and dynamics through the lens of my own experience within the neuroscience community. So far, my impressions have been mixed: while some aspects of Bluesky are genuinely promising, others raise concerns about the future of scientific communities on digital platforms. In this post, I would like to share a personal reflection on this recent shift, situating it within the broader context of academic social media migration.

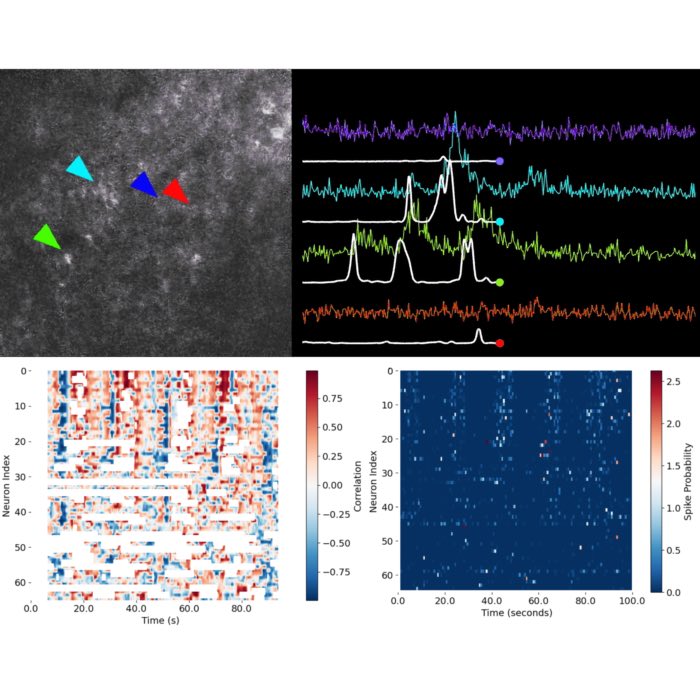

New teaching material: Functional imaging data analysis – From calcium imaging to network dynamics

We have just taught our new course, Functional Imaging Data Analysis: From Calcium Imaging to Network Dynamics, for the first time as part of the Master of Neuroscience program. The course is now freely available online under ‘Teaching’, alongside my other open educational materials. All resources were created with a strict focus on open content and reproducibility, so that anyone interested can make full use of the lectures, figures, and example code without copyright concerns. Feel free to use and share it.

Miniforge: The minimal, open solution for institutional Python environments

In response to recent licensing changes by Anaconda, Inc., Miniforge has emerged as the recommended minimal installer for Python and scientific computing in academic and research institutions. Now bundling both conda and mamba, Miniforge replaces Miniconda and Mambaforge, ensuring high performance and broad package access via the conda-forge ecosystem. In this post, we briefly demonstrate how to install and migrate to Miniforge, along with troubleshooting tips for common issues in institutional settings.

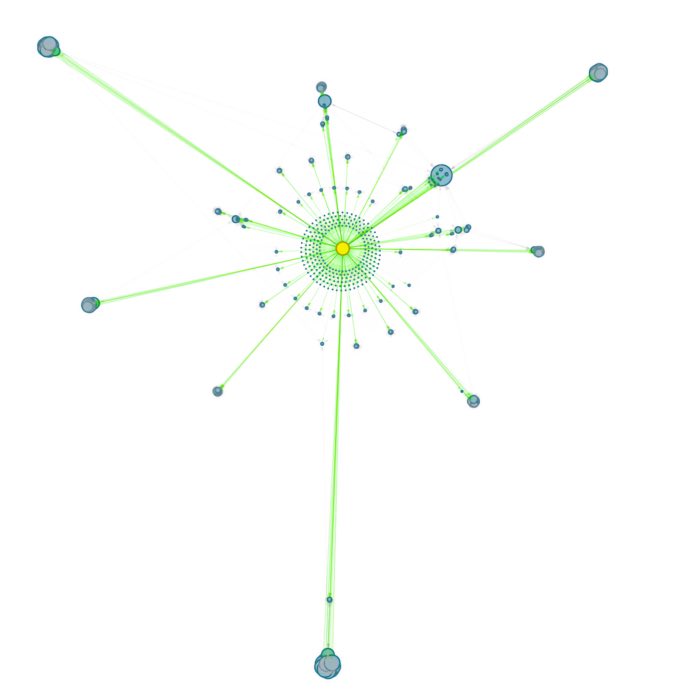

Exploring connected notes: Local graph views for DEVONthink knowledge bases

DEVONthink has long been my preferred tool for managing personal knowledge. Its smart features, like automatic Wiki-links and AI-based classification, make it ideal for organizing interconnected notes. But these links often stay invisible without a visual layer. To address this, I developed a tool that generates interactive local graphs, embedded directly into each note. These graphs reveal how notes connect, highlighting clusters and patterns that text alone might miss. Fully local and integrated into DEVONthink 3, the system offers a lightweight way to navigate Markdown notes spatially. After refining it for my own workflow, I’m now sharing it for others who might benefit from clearer structure and better insight into their knowledge base.

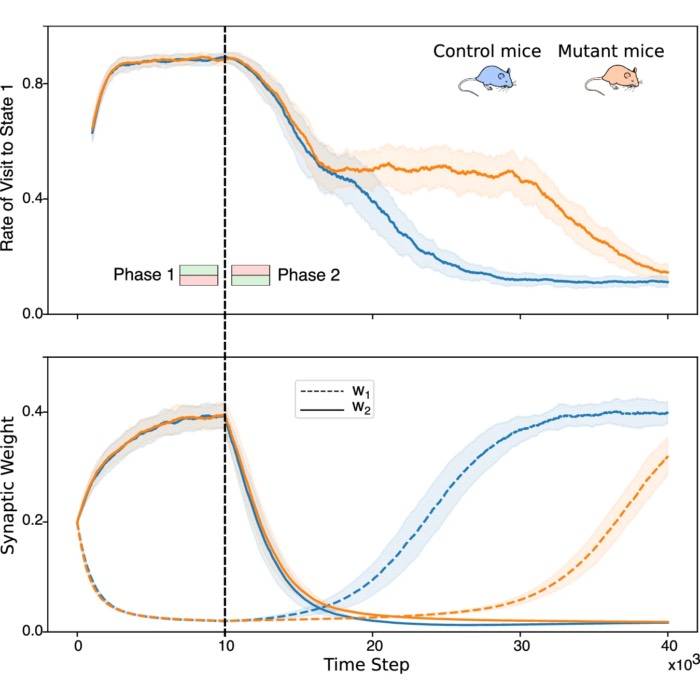

Astrocytes enhance plasticity response during reversal learning

Astrocytes, a type of glial cell traditionally considered support cells in the brain, are now recognized as active participants in synaptic plasticity and memory. I found this development particularly compelling and presented the following study in our Journal Club. The paper by Squadrani et al. (2024) explores the role of astrocyte-mediated D-serine regulation in modulating learning flexibility, particularly during reversal learning — the ability to adapt to changes in the environment. The work builds on prior experiments by Bohmbach et al. (2022), which identified an astrocyte-neuron feedback loop involving endocannabinoids and astrocytic D-serine release in the hippocampus.

New teaching material: Dimensionality reduction in neuroscience

We just completed a new two-day course on Dimensionality Reduction in Neuroscience, and I am pleased to announce that the full teaching material is now freely available under a Creative Commons (CC BY 4.0) license. This course is designed to provide an introductory overview of the application of dimensionality reduction techniques for neuroscientists and data scientists alike, focusing on how to handle the increasingly high-dimensional datasets generated by modern neuroscience research.

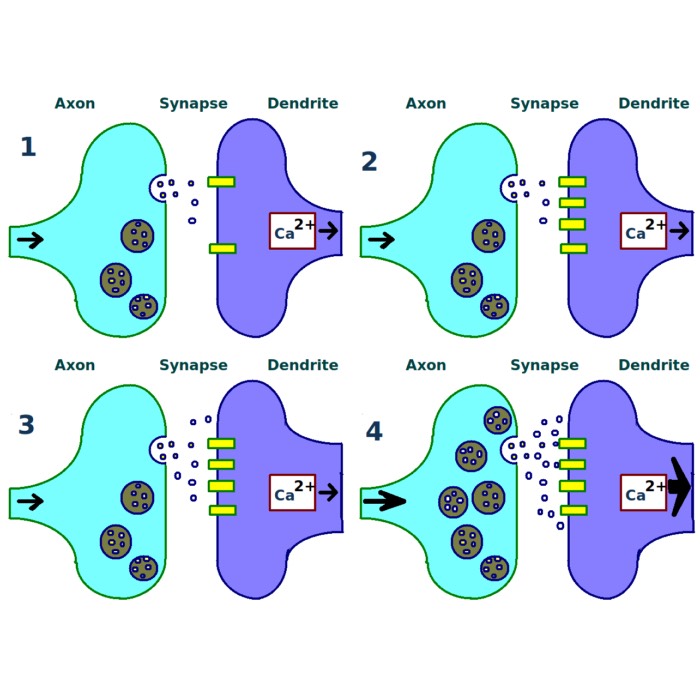

Long-term potentiation (LTP) and long-term depression (LTD)

Both long-term potentiation (LTP) and long-term depression (LTD) are forms of synaptic plasticity, which refers to the ability of synapses to change their strength over time. These processes are crucial for learning and memory, as they allow the brain to adapt to new information and experiences. Since we often discuss both processes in the context of computational neuroscience, I thought it would be useful to provide a brief overview of the biological mechanisms underlying them and their significance in the brain.